UPDATE: After CityNews contacted Instagram and

following the publication of this story, Sarah Taylor’s Instagram

account has been reinstated as of April 15.

A Facebook company spokesperson provided the following statement:

“We want our policies to be inclusive and reflect all

identities, and we are constantly iterating to see how we can improve.

We remove content that violates our policies, and we train artificial

intelligence to proactively find potential violations. This technology

is not trained to remove content based on a person’s size, it is trained

to look for violating elements – such as visible genitalia or text

containing hate speech. The technology is not perfect and sometimes

makes mistakes, as it did in this case – we apologize for any harm

caused.”

What started out as an exciting Instagram post about a pregnancy

announcement for one Toronto woman ended with her account being

deactivated due to what she fears is a biased algorithm that is facing

increased scrutiny.

Sarah Taylor, a plus-size model and personal trainer, posted a photo

of three running shoes beside a onesie and her baby’s sonogram. Soon

after, she said she received an alert from Instagram, telling her that

there was suspicious activity on her account and that she would therefore need to

go through a verification process to authenticate her page. Despite

doing so, she was told her account was being disabled for 24 hours.

“My page was gone, there was nothing there and it looked like I

didn’t exist,” said Taylor. “I wasn’t given a reason, I had never had

any community violations. To be shut down with no warning at all and no

previous faults against my account made no sense at all.”

The soon-to-be mom lost over 8,000 followers, over 90 per cent of

whom were women. She depends on her social media page for her

livelihood, keeping her Toronto business – a fitness studio that was

forced to shut down during the pandemic – running virtually.

After taking numerous steps to get her page back and following up

with the social media app to appeal the removal of her account, she was

told via email that her account was deactivated due to community

guidelines being violated, an allegation she disputes.

“I had no hate speech, no bullying, I am not nude on my photos and

mostly in fitness gear,” said Taylor. “All of my posts are all about

empowering women, it’s my life’s work to help women advocate for

themselves.”

More than two weeks later, Taylor still doesn’t know why her account

was deactivated, adding that she is unaware of whether or not she was

reported by someone else and if Instagram investigated prior to removing

her page.

“The fact that no one got back to me with details is really

disheartening as an influencer, as a business owner, and somebody who

owns a small business and is trying to survive during COVID,” Taylor

said. “I want them to give me actual reasons as to why it was shut down

in the first place because there was no cause for it. I want to see

change in the long run in algorithms. Stop filtering different groups if

they aren’t the typical beauty standard.”

CityNews reached out to Instagram last week to ask why Taylor’s account was removed but a response has not yet been provided.

The algorithm dilemma

Taylor took to her other Instagram page to bring attention to her

experiences and found that her story was just one of many that

highlighted issues surrounding Instagram’s algorithm, a set of

computerized rules and instructions used by the social media site.

“I discovered there were a couple other accounts that I know of who

talk about very similar topics as me that have been shut down, or have

had community violations, and have been shadow banned,” said Taylor.

“There are so many other things that have happened when it comes to

silencing the voices who are in marginalized bodies.”

For years now, a community of social media users has criticized

Instagram’s algorithms for being biased against plus-size account

holders, and especially those from racialized communities.

Yuan Stevens, Policy Lead on Technology, Cybersecurity &

Democracy at Ryerson University, said a computer system’s rules, in this

case algorithms, can discriminate against people.

One of the issues identified by Stevens is that algorithms are

assumed to be neutral and math-based, but the technology isn’t

impartial, and it’s made with the “biases of their creators.” The biases

built into algorithms and automated technology are also reflective of

their databases and can therefore favour people who hold values similar

to those of the creators.

Stevens said that has significant implications for plus-sized people on social media.

“I’m not surprised that plus-sized models could be targeted on social

media apps like Instagram,” she said. “We know that automated decision

making algorithms like face recognition technologies can be extremely

inaccurate.”

Just recently, over 50 plus-size content creators signed up to participate in the ‘Don’t Delete My Body’ project,

calling on Instagram to ‘stop censoring fat bodies’ and saying that queer and

BIPOC account holders are targeted at higher rates. The influencers, who

are from diverse backgrounds, posted photos with the caption “Why does

Instagram censor my body but not thin bodies?”

“There’s a bot in the algorithm and it measures the amount of

clothing to skin ratio and if there’s anything above 60 per cent, it’s

considered sexually explicit,” said Kayla Logan,

one of the creators of the project. “So if you’re fat and you’re in a

bathing suit, compared to your thin counterpart, that’s going to be

sexually explicit. It’s inherently fat phobic and discriminatory towards

fat people.”

Logan, who is a body-positive and mental health content creator, adds

that this issue has persisted for years. That’s why dozens participated

in the project, taking photos of themselves in swimwear, lingerie, and

some posed semi-nude while covering parts of their bodies. Logan said

the photos taken for this project are similar to what Instagram has

allowed other account holders to post without penalty.

“Instagram is doing everything they possibly can to silence you. They

will delete posts, they will flag your stories and remove them,” Logan

said. “Everyone shared their experience of censorship, especially on

Instagram. It’s not an isolated incident being fat and being silenced on

Instagram or losing your platform.”

Logan describes herself as a body-positive fat liberation activist

who has posted photos in lingerie posing next to iconic places around

the world. These algorithms have also impacted her account, locking her

out without any notice numerous times for posting content that’s similar

to what non-plus-size accounts post.

“I’m all about showing your body in very

artistic, beautiful, non sexualized ways. But on Instagram, fat bodies

are considered sexualized.”

Logan claims she’s also been shadow-banned for years now. Shadow-banning is

the practice of restricting content and limiting an account’s reach

so that photos and videos don’t appear on the Explore page. For social

media users who depend on these apps for business, that may mean losing

customers and opportunities. Logan also adds that her story views have

decreased by half and her branded content feature was removed, which

impacts her ability to do business with companies.

“I did have that confirmed by one of the largest companies in Canada,

when their IT department looked into it for me,” said Logan. “A company

wanted to put money into a sponsored post we were doing and I didn’t

have that feature. I felt really embarrassed and ashamed and I had to

tell them that I believe I’m shadow-banned.”

“It’s like this hush thing in the community where us plus-size

influencers talk about it a lot but Instagram denies that it exists,”

Logan added.

Both Taylor and Logan have said that contacting Instagram has been

one of the biggest challenges, and there’s been a lack of transparency

and accountability, especially when they’re accused of violating

community rules and their content is repeatedly removed.

“There’s no human entity to speak with so we’re shouting into this

void,” said Logan. “They’re losing their community and their livelihood,

and even they can’t get a hold of Instagram. These are people that have

half-a-million viewership and they can’t have this conversation with

Instagram.”

“Start hiring people to actually look at things,” Taylor added. “If I

submit an appeal to my account, someone should be looking at it and

give me an actual answer rather than just a link with no details. It’s

unfair and something has to change. That’s my hope in speaking up.”

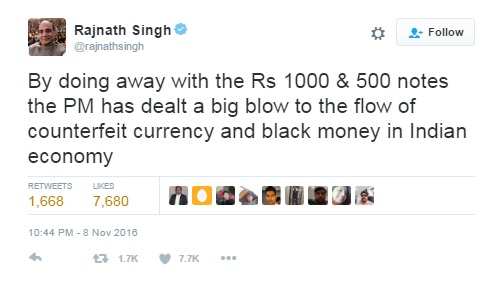

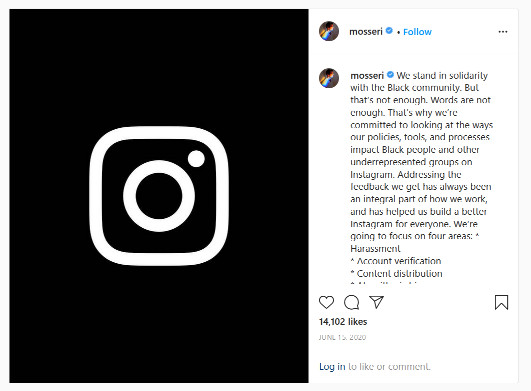

Criticism over Instagram’s algorithms started long before Taylor’s account was removed.

Last June, when the world saw mass protests highlighting the deaths

of Black people at the hands of police officers and calling on governments and

institutions to address systemic racism, the head of Instagram made a

post standing in solidarity with the Black community.

Instagram head Adam Mosseri wrote then that the company would do a

better job of serving underrepresented groups in four areas, including

addressing algorithmic bias.

CityNews reached out to Facebook, which owns Instagram, to ask about

the issue of algorithm biases, shadow-banning, and how the company

investigates flagged accounts prior to removing them. A response has not

yet been provided.

“This is a greater conversation, it’s not just about you shutting my

business page with no reason,” Taylor said. “I’m wondering if it’s a

bigger conversation about censorship. If that’s the case, I will

continue to be loud because that’s not okay.”

The algorithm debate

Algorithms used by social media sites have sparked big debates on not

only censorship, but the responsibility companies have in addressing

issues such as online hate, white supremacy, harassment and misogyny.

“It is worse for those who are Black, Indigenous and Asian because

they do get targeted even more and that’s not okay,” Taylor said. “It’s

very frustrating.”

Stevens said technology works in favour of some people and against

others because of its bias and potential for discrimination, adding that

Instagram’s algorithms aren’t perfect.

“Automated decision making technology is important because it speeds

up human decision making processes and allows decisions to be made at a

significant scale,” she explained. “Whereas Facebook is removing

content, historically it would have relied on a person to make that

decision, automation would speed that process up and allow content to

be removed at an incredible scale and speed.”

Stevens is part of a team at Ryerson Labs, looking at face

recognition technology and how algorithms work. She cites the work of

Shoshana Zuboff, a scholar and leader in the field of “surveillance

capitalism,” saying algorithms play a role for social media companies

who are collecting data.

“These companies are in the business of understanding how we think

and work and nudging us in certain directions, and that’s really

significant,” said Stevens. “We expect to know how technology works but

algorithmic technology sometimes, it teaches itself because we feed it

data.”

Stevens and her team are hoping to highlight the work of Joy

Buolamwini, a computer scientist and digital activist who founded the

Algorithmic Justice League, focusing on creating equitable and

accountable technology.

As explained by Stevens, the organization has identified how face

recognition algorithms, which are being used by social media companies,

can often be inaccurate. Recently, the AJL analyzed 189 face algorithms

submitted by developers around the world and found concerning results.

“What they found was that the algorithms were 10

to 100 times more likely to inaccurately identify a photograph of a

Black or East Asian person compared to a white person,” said Stevens.

“What this means is that if you are in a database and you are being

chosen for something or if they wanted to remove content or for some

reason target you in some way, the chances of you being misidentified

are so much greater if you’re East Asian or Black.”

Stevens adds that there needs to be more research in Canada that

looks at the use of algorithms and how decisions are made, not only on

social media, but also when it comes to policing.

Most of the research cited comes from the U.S., where there have been

instances of people being wrongly accused of committing a crime as

police services have also been known to use facial recognition

technology.

“There needs to be solutions,” argued Stevens. “Social media

companies are increasingly using algorithms and AI to make decisions.

Our work uncovered that in 2020 Facebook’s Community Standards

Enforcement report demonstrated that they’re continuously expanding

their use of algorithms to make content removal decisions.”

Attention has also turned to Canada’s privacy laws when it comes to

facial recognition technology. Stevens said it’s important that our

government’s laws advance to prevent what she calls “wrongful takedowns”

and instead, require social media companies to be more transparent

about how they make their decisions.

“People should understand how decisions are made. Right now companies

aren’t required to make these decisions transparent,” Stevens said.

“It’s incredibly important that companies are required by the Canadian

government to be open about how they decide how content is removed.

Right now, we don’t have that transparency.”

Unfairly targeted

Not having access to her account has resulted in a loss of business for Taylor, who was crowned Miss Plus Canada 2014.

Since the pandemic closed down her physical gym, she’s moved her

operations online and Instagram has become a key component to growing

her community.

The expectant mother also depends on social media as she works with

big brands like Nike, Lululemon and Penningtons. She’s created a

community with people from all around the world, which is why she’s

hoping Instagram will give her her page back.

“It wasn’t just a page, it was a community,” she said. “To lose that

makes me really sad, and it’s also disheartening that it happened after

announcing my pregnancy. I’ve definitely lost opportunities. It’s

basically slowed to a halt.”

Logan, who has nearly 38,000 followers on Instagram, adds that she

and others who have been unfairly targeted by algorithms have had to

create backup accounts just in case their pages are removed.

“I don’t know any thin bloggers who have a backup account in case

they lose their page,” Logan said. “Almost all of my friends I know, we

have backup accounts because we are terrified every single day that our

accounts will be gone. So just in case, we have that second platform.”

It’s been said for decades now that society needs to do a better job

of being representative and inclusive of communities who haven’t always

had a platform for representation. The same can be said about social

media. Empowering other women who haven’t always seen

themselves reflected has been central for both Taylor and Logan.

“I grew up as a big kid, I was a size 12 and I was bullied heavily,

not just verbally. I was beat up by the guys in grade school,” Taylor

said. “It became my internal dialogue and I grew up hating myself. It

affected all the decisions I made and led me to marry a man who was

abusive.”